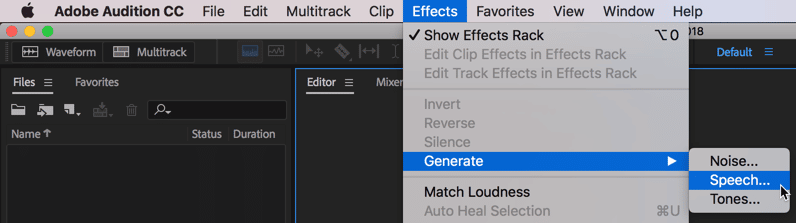

(Image credit: Apple) The current state of ASRīuilding on decades of evolution – and in response to rising user expectations – speech recognition technology has made further leaps over the past half-decade. This forward momentum was also largely driven by emergence and increased availability of low-cost computing and massive algorithmic advances. In the early 2010s, the emergence of deep learning, Recurrent Neural Networks (RNNs), and Long short-term memory (LSTM), led to a hyperspace jump in the capabilities of ASR tech. And it had Google's processing clout to drive the quality forwards.Īpple (Siri) and Microsoft (Cortana) followed just to stay in the game. But it also recycled the speech data of millions of networked users as training material for machine learning. Google Voice Search (2007) delivered voice recognition tech to the masses.

Such was the opportunity spotted by Mike Cohen, who joined Google to launch the company's speech tech efforts in 2004. Personal computing and the ubiquitous network created new angles for innovation. VRCP now handles around 1.2 billion voice transactions each year.īut most of the work on speech recognition in the 1990s took place under the hood. In 1992, AT&T introduced Bell Labs’ Voice Recognition Call Processing (VRCP) service. Until the launch of Dragon Naturally Speaking in 1997, users still needed to pause between every word. It cost $9,000 - roughly $18,890 in 2021 accounting for inflation. In 1990, Dragon Dictate launched as the first commercial speech recognition software. Statistical analysis was now driving the evolution of ASR technology. Rather than exhaustively studying how people listen to and understand speech, we wanted to find the natural way for the machine to do it.” “After all, if a machine has to move, it does it with wheels-not by walking. “We thought it was wrong to ask a machine to emulate people,” recalls IBM’s speech recognition innovator Fred Jelinek. However, the system was still too unwieldy for commercial use. Properly trained, Tangora could recognize and type 20,000 words in English. Indeed, in the 1980s, the first viable use cases for speech-to-text tools emerged with IBM's experimental transcription system, Tangora. This probabilistic method drove the development of ASR in the 1980s. HARPY was among the first to make use of Hidden Markov Models (HMM). Like a three-year-old, speech recognition was now charming and had potential – but you wouldn’t want it in the office. HARPY recognized sentences from a vocabulary of 1,011 words, giving the system the power of the average three-year-old. The fruits of this research included the HARPY Speech Recognition System from Carnegie Mellon. In the 1970s, The Department of Defense ( DARPA) funded the Speech Understanding Research (SUR) program. 
It's one thing for a computer to understand a small range of numbers (i.e., 0-9), but Kyoto University's breakthrough was to 'segment' a line of speech so the tech could go to work on a range of spoken sounds.

Meanwhile, Japanese labs were developing vowel and phoneme recognizers and the first speech segmenter. IBM followed in 1962 with the Shoebox, which recognized numbers and simple math terms.